“I’ll have you eating out of the palm of my hand” is something you’re unlikely to hear from a robot. Why? Most of them don’t have palms.

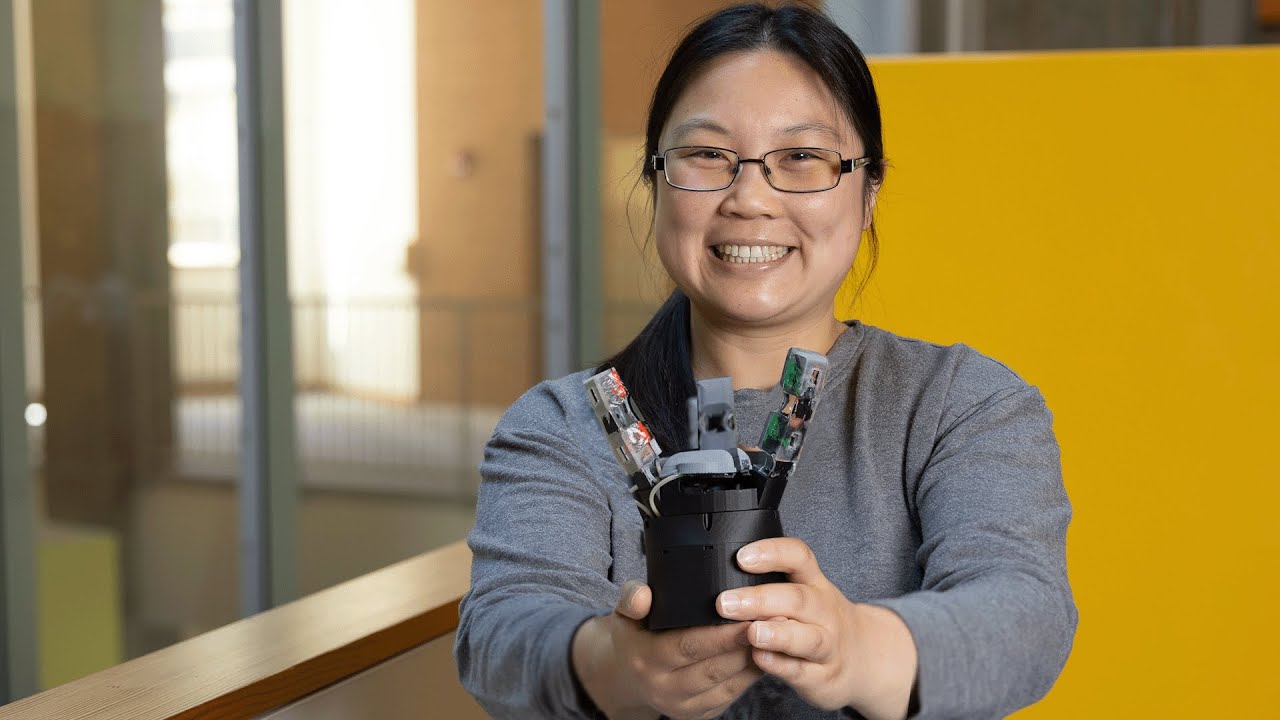

If you have kept up with the protean field of robotics, you know that getting robots to grip and grasp more like humans has been an ongoing Herculean effort. Now, a new robotic hand design developed in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) has rethought the oft-overlooked palm. The new design uses advanced sensors for a highly sensitive touch, helping the “extremity” handle objects with more detailed and delicate precision.

GelPalm has a gel-based, flexible sensor embedded in the palm, drawing inspiration from the soft, deformable nature of human hands. The sensor relies on a special color illumination technique: red, green, and blue LEDs light an object, and a camera captures the reflections. This combination generates detailed 3D surface models for precise robotic interactions.
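To give a rough sense of how colored lights and a single camera can yield 3D shape, here is a minimal sketch of photometric stereo, a technique commonly used with camera-based gel sensors. It is an illustration under stated assumptions, not GelPalm's actual pipeline: the light directions in `make_lights`, the idealized matte (Lambertian) reflection model, and all function names are hypothetical.

```python
import math


def make_lights(elevation_z=0.6):
    """Three unit light directions spaced 120 degrees apart.

    Hypothetical layout for the red, green, and blue LEDs; the real
    sensor geometry is not described in the article.
    """
    r = math.sqrt(1.0 - elevation_z ** 2)
    angles = (0.0, 2.0 * math.pi / 3.0, 4.0 * math.pi / 3.0)
    return [(r * math.cos(a), r * math.sin(a), elevation_z) for a in angles]


LIGHTS = make_lights()


def det3(m):
    """Determinant of a 3x3 matrix given as three rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def solve3(m, rhs):
    """Solve the 3x3 linear system m @ x = rhs with Cramer's rule."""
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("light directions must be linearly independent")
    solution = []
    for col in range(3):
        mc = [list(row) for row in m]
        for row in range(3):
            mc[row][col] = rhs[row]
        solution.append(det3(mc) / d)
    return tuple(solution)


def render(normal, albedo=1.0, lights=LIGHTS):
    """Idealized forward model: each color channel sees albedo * (light . normal)."""
    return tuple(
        albedo * sum(l * n for l, n in zip(light, normal)) for light in lights
    )


def normal_from_rgb(rgb, lights=LIGHTS):
    """Recover the unit surface normal and albedo at one pixel from its RGB value."""
    scaled = solve3(lights, rgb)  # albedo-scaled normal
    albedo = math.sqrt(sum(v * v for v in scaled))
    if albedo == 0.0:
        return (0.0, 0.0, 1.0), 0.0
    return tuple(v / albedo for v in scaled), albedo


def surface_slopes(normal):
    """Convert a unit normal into height-map slopes (dz/dx, dz/dy)."""
    nx, ny, nz = normal
    if nz == 0.0:
        raise ValueError("normal is parallel to the sensor plane")
    return (-nx / nz, -ny / nz)


if __name__ == "__main__":
    true_normal = (0.0, 0.0, 1.0)  # a flat patch facing the camera
    rgb = render(true_normal, albedo=0.5)
    estimate, albedo = normal_from_rgb(rgb)
    print(estimate, albedo, surface_slopes(estimate))
```

Repeating this per pixel gives a field of surface slopes, which can then be integrated into a height map. A real sensor would also need calibration to account for the gel's optics and for lights that are not perfectly directional.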

Video: GelPalm (MIT CSAIL)

And what would the palm be without its facilitative fingers? The team also developed some robotic phalanges, called ROMEO (“RObotic Modular Endoskeleton Optical”), with flexible materials and sensing technology similar to the palm’s. The fingers have something called “passive compliance,” which is when a robot can adjust to forces naturally, without needing motors or extra control. This in turn helps with the larger objective: increasing the surface area in contact with objects so they can be fully enveloped. Manufactured as single, monolithic structures via 3D printing, the fingers are cost-effective to produce.

Beyond improved dexterity, GelPalm offers safer interaction with objects, something that’s especially handy for potential applications like human-robot collaboration, prosthetics, or robotic hands with human-like sensing for biomedical uses.

Previous robotic designs have typically focused on enhancing finger dexterity. Liu’s approach shifts the focus to create a more human-like, versatile end effector that interacts more naturally with objects and performs a broader range of tasks.

“We draw inspiration from human hands, which have rigid bones surrounded by soft, compliant tissue,” says recent MIT graduate Sandra Q. Liu SM ’20, PhD ’24, the lead designer of GelPalm, who developed the system as a CSAIL affiliate and PhD student in mechanical engineering. “By combining rigid structures with deformable, compliant materials, we can better achieve that same adaptive talent as our skillful hands. A major advantage is that we don’t need extra motors or mechanisms to actuate the palm’s deformation — the inherent compliance allows it to automatically conform around objects, just like our human palms do so dexterously.”

The researchers put the palm design to the test. Liu compared the tactile sensing performance of two different illumination systems — blue LEDs versus white LEDs — integrated into the ROMEO fingers. “Both yielded similar high-quality 3D tactile reconstructions when pressing objects into the gel surfaces,” says Liu.

But the critical experiment, she says, was to examine how well the different palm configurations could envelop and stably grasp objects. The team got hands-on, literally slathering plastic shapes in paint and pressing them against four palm types: rigid, structurally compliant, gel compliant, and their dual compliant design. “Visually, and by analyzing the painted surface area contacts, it was clear having both structural and material compliance in the palm provided significantly more grip than the others,” says Liu. “It’s an elegant way to maximize the palm’s role in achieving stable grasps.”
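As a rough illustration of how painted contact areas might be compared, the sketch below computes the fraction of a palm image covered by paint. It assumes each palm photo has already been converted into a binary mask (1 for paint, 0 for no paint). The function name, the mask format, and the tiny example masks are hypothetical and do not represent the team's data or analysis code.

```python
def contact_fraction(mask):
    """Return the share of pixels marked as painted in a binary mask.

    `mask` is a list of rows, each a list of 0/1 values. This format is
    an assumption made for illustration.
    """
    total = sum(len(row) for row in mask)
    if total == 0:
        return 0.0
    painted = sum(1 for row in mask for value in row if value)
    return painted / total


if __name__ == "__main__":
    # Toy masks with neutral labels; these are not experimental results.
    masks = {
        "palm_a": [[1, 0, 0], [0, 0, 0]],
        "palm_b": [[1, 1, 0], [1, 1, 0]],
    }
    for name, mask in masks.items():
        print(name, round(contact_fraction(mask), 3))
```

A larger fraction would indicate that more of the palm surface touched the object, which is the quantity the paint test is meant to expose.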

One notable limitation is the challenge of integrating sufficient sensory technology within the palm without making it bulky or overly complex. The use of camera-based tactile sensors introduces issues with size and flexibility, the team says, as the current tech doesn’t easily allow for extensive coverage without trade-offs in design and functionality. Addressing this could mean developing more flexible materials for mirrors and enhancing sensor integration to maintain functionality without compromising practical usability.

“The palm is almost completely overlooked in the development of most robotic hands,” says Columbia University Associate Professor Matei Ciocarlie, who wasn’t involved in the paper. “This work is remarkable because it introduces a purposefully designed, useful palm that combines two key features, articulation and sensing, whereas most robot palms lack either. The human palm is both subtly articulated and highly sensitive, and this work is a relevant innovation in this direction.”

“I hope we’re moving toward more advanced robotic hands that blend soft and rigid elements with tactile sensitivity, ideally within the next five to 10 years. It’s a complex field without a clear consensus on the best hand design, which makes this work especially thrilling,” says Liu. “In developing GelPalm and the ROMEO fingers, I focused on modularity and transferability to encourage a wide range of designs. Making this technology low-cost and easy to manufacture allows more people to innovate and explore. As just one lab and one person in this vast field, my dream is that sharing this knowledge could spark advancements and inspire others.”

Ted Adelson, the John and Dorothy Wilson Professor of Vision Science in the Department of Brain and Cognitive Sciences and CSAIL member, is the senior author on a paper describing the work. The research was supported, in part, by the Toyota Research Institute, Amazon Science Hub, and the SINTEF BIFROST project. Liu presented the research at the International Conference on Robotics and Automation (ICRA) earlier this month.