Every programming language has two kinds of speed: speed of development, and speed of execution. Python has always favored writing fast over running fast. Although Python code is almost always fast enough for the task, sometimes it isn’t. In those cases, you need to find out where and why it lags, and do something about it.

A well-respected adage of software development, and engineering generally, is “Measure, don’t guess.” With software, it’s easy to assume what’s wrong, but never a good idea to do so. Statistics about actual program performance are always your best first tool in the pursuit of making applications faster.

The good news is, Python offers a whole slew of packages you can use to profile your applications and learn where they’re slowest. These tools range from simple one-liners included with the standard library to sophisticated frameworks for gathering stats from running applications. Here I cover nine of the most significant, most of which run cross-platform and are readily available either in PyPI or in Python’s standard library.

Time and Timeit

Sometimes all you need is a stopwatch. If all you’re doing is profiling the time between two snippets of code that take seconds or minutes on end to run, then a stopwatch will more than suffice.

The Python standard library comes with two functions that work as stopwatches. The time module has the perf_counter function, which calls on the operating system’s high-resolution timer to obtain an arbitrary timestamp. Call time.perf_counter once before an action and once after, then take the difference between the two values. This gives you an unobtrusive, low-overhead (if also unsophisticated) way to time code.
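As a minimal sketch of the stopwatch approach, the snippet below wraps a single call in two time.perf_counter readings. The busy_work function is a hypothetical stand-in for whatever code you want to time; it does not come from any library.

```python
import time


def busy_work(n):
    """Hypothetical stand-in for the code being timed."""
    return sum(i * i for i in range(n))


# perf_counter returns an arbitrary timestamp in seconds;
# only the difference between two readings is meaningful.
start = time.perf_counter()
result = busy_work(200_000)
elapsed = time.perf_counter() - start

print(f"busy_work took {elapsed:.4f} seconds")
```

The measured figure will vary from run to run and from machine to machine, so treat a single reading as a rough indication rather than a benchmark.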

The timeit module attempts to perform something like actual benchmarking on Python code. The timeit.timeit function takes a code snippet, runs it many times (the default is 1 million passes), and obtains the total time required to do so. It’s best used to determine how a single operation or function call performs in a tight loop. For instance, you might want to determine whether a list comprehension or a conventional list construction will be faster for something done many times over. (List comprehensions usually win.)
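The list comparison mentioned above could be sketched roughly as follows. The two functions, with_loop and with_comprehension, are illustrative names invented for this example, and the number argument is lowered from its default of 1 million so the script finishes quickly.

```python
import timeit


def with_loop():
    # Conventional list construction with append
    out = []
    for i in range(100):
        out.append(i * 2)
    return out


def with_comprehension():
    # Same result built with a list comprehension
    return [i * 2 for i in range(100)]


# timeit.timeit accepts a callable; number= overrides the default pass count
loop_time = timeit.timeit(with_loop, number=20_000)
comp_time = timeit.timeit(with_comprehension, number=20_000)

print(f"loop:          {loop_time:.3f} s for 20,000 passes")
print(f"comprehension: {comp_time:.3f} s for 20,000 passes")
```

Each figure is the total time for all passes, not the time per pass, so divide by the pass count if you want a per-call number.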

The downside of time is that it’s nothing more than a stopwatch, and the downside of timeit is that its main use case is microbenchmarks on individual lines or blocks of code. These modules only work if you’re dealing with code in isolation. Neither one suffices for whole-program analysis, that is, finding out where in thousands of lines of code your program spends most of its time.

cProfile

The Python standard library also comes with a whole-program analysis profiler, cProfile. When run, cProfile traces every function call in your program and generates a list of which functions were called most often and how long the calls took on average.

cProfile has three big strengths. One, it’s included with the standard library, so it’s available even in a stock Python installation. Two, it profiles a number of different statistics about call behavior—for instance, it separates out the time spent in a function call’s own instructions from the time spent by all the other calls invoked by the function. This lets you determine whether a function is slow itself or it’s calling other functions that are slow.
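To illustrate the split between a function’s own time and the time spent in the calls it makes, here is a small sketch using cProfile together with the standard library’s pstats module. The functions slow_helper and caller are hypothetical examples written for this illustration.

```python
import cProfile
import io
import pstats


def slow_helper():
    # Does the actual heavy lifting
    return sum(i * i for i in range(50_000))


def caller():
    # Little work of its own; nearly all of its time is spent in slow_helper
    return [slow_helper() for _ in range(5)]


profiler = cProfile.Profile()
profiler.runcall(caller)  # profile just this one call

# Write the report to a string so it can be inspected or printed
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer)
stats.sort_stats("cumulative").print_stats(10)
report = buffer.getvalue()
print(report)
```

In the report, the tottime column shows time spent in a function’s own instructions, while cumtime also includes everything that function called. A function like caller should show a small tottime and a large cumtime, which points to its callees as the place to look.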

Three, and perhaps best of all, you can constrain cProfile freely. You can profile a whole program’s run, or you can toggle profiling on only when a select function runs, the better to focus on what that function is doing and what it is calling. This approach works best after you’ve narrowed things down a bit, but it saves you the trouble of having to wade through the noise of a full profile trace.
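One way to toggle profiling around a single function is to call enable and disable on a cProfile.Profile object, as in the sketch below. The setup and hot_function names are hypothetical placeholders for your own code.

```python
import cProfile
import pstats


def setup():
    # Preparation work we do not want in the profile
    return list(range(100_000))


def hot_function(data):
    # The function we actually want to examine
    return sorted(data, key=lambda x: -x)


profiler = cProfile.Profile()

data = setup()                 # runs before profiling is switched on
profiler.enable()
result = hot_function(data)    # only this call is traced
profiler.disable()

stats = pstats.Stats(profiler)
stats.sort_stats("tottime").print_stats(5)
```

Because setup runs before enable is called, it never appears in the statistics, which keeps the report limited to hot_function and whatever it calls.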

Which brings us to the first of cProfile’s drawbacks: It generates a lot of statistics by default. Trying to find the right needle in all that hay can be overwhelming. The other drawback is cProfile’s execution model: It traps every single function call, creating a significant amount of overhead. That makes cProfile unsuitable for profiling apps in production with live data, but perfectly fine for profiling them during development.

For a more detailed rundown of cProfile, see our separate article.

FunctionTrace

FunctionTrace works like cProfile in its general outlines: You pass it the name of the script you want to profile, without having to add instrumentation to the code, and it generates a detailed trace of function calls and memory usage over time. FunctionTrace also handles multithreaded/multiprocess applications without your having to do anything extra. See this article for the technical details behind how FunctionTrace works.

Like cProfile, FunctionTrace does not use sampling; every action is recorded. The profiling components are written in Rust for speed. FunctionTrace’s developers claim the profiling overhead imposed on applications is less than 10%.

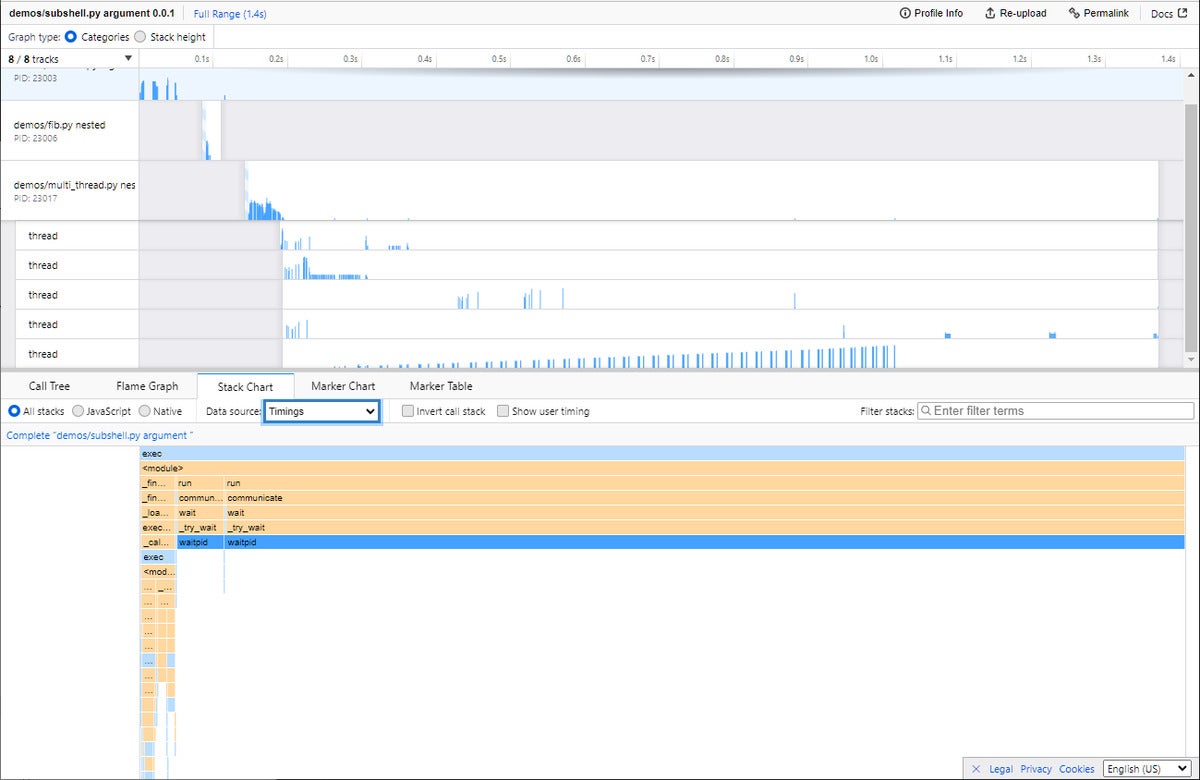

Trace data is saved in JSON format, so you can in theory use any application to parse it. But FunctionTrace’s big advantage is that it uses the Firefox Profiler—which will run in any JavaScript-enabled browser, not just Firefox—to render the results to an interactive graph.

Note that FunctionTrace’s profiling components are not yet available on Windows; profiling can only be performed on Linux or Mac systems.

FunctionTrace uses the Firefox Profiler (which can run in any JavaScript-enabled browser) to make trace statistics interactive and explorable.