In 2022, malicious emails targeting Pennsylvania county election workers surged around its primary elections on May 17, rising more than 546% in six months. Paired with the potential for nefarious large language models (LLMs) on top of these traditional phishing attacks, there’s a high likelihood that…

Cyber threat prevention ahead of U.S. elections – CyberTalk

Mark Ostrowski is Head of Engineering, U.S. East, for Check Point, a global cyber security company. With over 20 years of experience in IT security, he has helped design and support some of the largest security environments in the country. Mark actively contributes to national and local media, discussing cyber security and its effects in business and at home. He also provides thought leadership for the IT security industry.

In the U.S., election season is underway. In this exclusive interview, Check Point’s Head of Engineering, U.S. East, Mark Ostrowski, discusses disruption, misinformation and more. Explore the challenges. Stand prepared for a season like never before. Don’t miss this!

What kinds of cyber-related election threats are you seeing? What are you seeing in relation to voter data and attempts to steal it, if anything?

A few thoughts as we approach November. I’m not hearing too much real-time chatter on active threats or activity. However, there has been no slowdown or shortage of ongoing attacks that have been accumulating user credentials and identity information. Only the future will show whether this data will be used en masse during the election cycle.

What to expect as we approach the election? Disruption through DDoS attacks and misinformation on internet-based platforms (social media). With the AI evolution, we can also expect more sophisticated campaigns.

What attack surfaces should local governments and state governments strive to protect ahead of the upcoming elections?

State and local governments need to protect all attack surfaces, as any weakness will be exploited to create disruption. These entities should now be exploring what ‘normal’ is and begin to model traffic to identify any anomalies as the election cycle carries on.
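As a rough illustration of what modeling "normal" traffic can look like, the sketch below flags observations that sit far from a baseline average. It is a minimal example under stated assumptions, not a description of any Check Point product or of a method recommended in the interview: the function name `find_anomalies`, the requests-per-minute figures and the three-standard-deviation threshold are all hypothetical.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value, z_score) for observed values that deviate
    from the baseline mean by more than `threshold` standard deviations."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline
    flagged = []
    for i, value in enumerate(observed):
        if sigma == 0:
            # A perfectly flat baseline: any different value is anomalous.
            z = 0.0 if value == mu else float("inf")
        else:
            z = (value - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, value, z))
    return flagged


# Hypothetical requests-per-minute samples (illustrative numbers only).
baseline = [120, 130, 125, 118, 135, 128, 122, 131]
observed = [127, 133, 480, 124]

for index, value, z in find_anomalies(baseline, observed):
    print(f"sample {index}: {value} requests/min (z-score {z:.1f})")
```

In practice a baseline would likely need to account for time of day, day of week and expected election-related peaks, so a single global average like this one is only a starting point.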

How can government agencies work to ensure the security of the election supply chain?

Supply chain security is more critical than ever and all levels of government need to understand from where their vendors’ and partners’ source code, equipment and updates to software derive. Ensuring protection from code to runtime is critical during times of heightened security concerns, as again, any known vulnerability will be exploited.

What measures are government agencies putting in place to protect the integrity of voter registration databases? Or what kinds of software should they have in place?

Protecting the integrity of voter registration data is a 24/7, 365-day job and not something that should be overlooked at any time. Wherever there is identity or user data, all layers of preventative security should be in place: network, endpoint, threat hunting, ransomware protection, mobile, email security and so on. All of these vectors should be secured if the user or application has access to the registration information.

In the event of a cyber attack on election day, what kinds of contingency plans should local and state governments have in place to ensure that voting can proceed?

All organizations should have run tabletop exercises of real-life scenarios that invoke contingency plans in case there is an active attack on election day. These exercises should include vendors and partners, establish open lines of communication, and account for scenarios both as election day approaches and in the days after. These scenarios should not be limited to cyber security alone; they should also include physical security scenarios.


Game Informer’s Top Scoring Reviews Of 2024

Introduction

Each year, the Game Informer staff reviews a ton of games, but only a select few earn the highest scores. While you can find our full list of reviews here, we know that some people are just looking for the best of the best, so we’ve gathered our top-scoring reviews of 2024 right here. Whether you’re talking hotly anticipated blockbusters like Final Fantasy VII Rebirth and Like a Dragon: Infinite Wealth or surprise hits like Helldivers 2 and Balatro, we have you covered with all the must-play games we’ve reviewed in 2024.

Be sure to bookmark this page and check back frequently, as we’ll continue updating this page as more titles earn review scores on the top end of our review scale!

9.5

9

8.75

8.5


YoloBox Ultra Workflow for Funerals and Weddings – Videoguys

Discover the ultimate guide to utilizing the YoloLiv YoloBox Ultra for funeral and wedding filming, as showcased by Danny Dodge. With adept strategies and practical insights, Danny demonstrates how to maximize the potential of the YoloBox Ultra for capturing poignant moments in emotionally charged environments. From strategic camera placements to optimized lighting and audio setups, Danny’s expertise ensures seamless and professional video production, whether in the solemn setting of a funeral or the jubilant atmosphere of a wedding. His innovative approach not only showcases the versatility of the YoloBox Ultra but also offers invaluable guidance for videographers aiming to elevate their craft with empathy and precision.

The YoloBox Ultra is an advanced all-in-one streaming solution that seamlessly combines the features of the YoloBox Pro and Instream into a single, portable device. It boasts impressive versatility, offering four HDMI inputs, three USB inputs, and three simultaneous live IP sources. You can include media assets like video clips, PDFs, and images. The YoloBox Ultra enables you to stream to up to six destinations simultaneously, including popular platforms like Facebook, YouTube, and Twitch. With mission-critical cellular bonding, it ensures reliable streaming even in challenging network conditions. The device utilizes High-Efficiency Video Coding (HEVC) for excellent video quality while reducing bandwidth. Its powerful CPU upgrade provides enhanced performance, and the bright 8-inch display ensures clear visibility. Whether you’re a content creator, live streamer, or professional, the YoloBox Ultra empowers you to elevate your broadcasts and engage your audience effectively.


HBO’s The Last Of Us Season 2 Has Found Its Manny, Mel, Nora, And Owen

HBO’s The Last of Us Season 2 has found its Manny, Mel, Nora, and Owen, all key characters in the story that unfolds in The Last of Us Part II. Announced today, Danny Ramirez (The Falcon and the Winter Soldier, Top Gun: Maverick) will play Manny, Ariela Barer (Runaways) will play Mel, Tati Gabrielle (Chilling Adventures of Sabrina) will play Nora, and Spencer Lord (Riverdale) will play Owen.

These four actors will join Bella Ramsey and Pedro Pascal, who are returning as Ellie and Joel respectively, alongside new additions like Kaitlyn Dever as Abby, Isabela Merced as Dina, and Young Mazino as Jesse.


HBO describes Manny as “a loyal soldier whose sunny outlook belies the pain of old wounds and a fear that he will fail his friends when they need him most,” and Mel as “a young doctor whose commitment to saving lives is challenged by the realities of war and tribalism,” according to a Variety report.

The streaming service describes Nora as “a military medic struggling to come to terms with the sins of her past,” and Owen as “a gentle soul trapped in a warrior’s body, condemned to fight an enemy he refuses to hate.”

We won’t spoil these characters’ roles in The Last of Us Part II, which are likely to match their roles in HBO’s The Last of Us Season 2, but if you know, you know.

Fans of Naughty Dog’s Last of Us series on PlayStation eagerly await the second season of HBO’s The Last of Us. The first season debuted in January of last year, covering the events of the first game and its Left Behind DLC. HBO quickly confirmed the show would be getting a second season, which is set to premiere next year.

We loved the first season and can’t wait to see how it adapts The Last of Us Part II, which got a remaster in January. It seems we aren’t alone either – The Last of Us’ premiere was HBO’s second-largest debut since 2010, and viewers stuck around for the entire season.

The first season of The Last of Us has already won eight Emmy awards. For more, read Game Informer’s review of The Last of Us and then read Game Informer’s review of The Last of Us Part II.

What do you think of these casting choices? Let us know in the comments below!

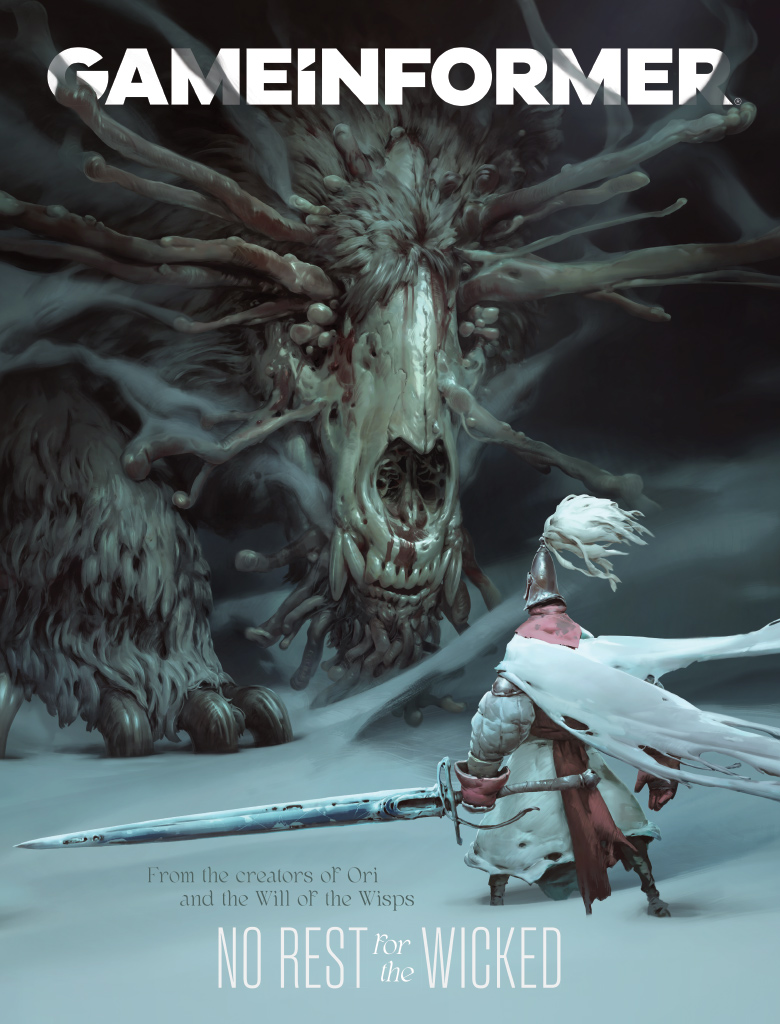

Cover Reveal – No Rest For The Wicked

Moon Studios cemented itself as a premier upstart studio thanks to its stellar work on Ori and the Blind Forest and Ori and the Will of the Wisps. The team is parlaying that success and experience to craft a dramatically different project it’s been dreaming about for years: No Rest for the Wicked. This dark fantasy action RPG combines influences from a variety of genres, from ARPGs like Diablo to the difficulty and thoughtful combat of Dark Souls, to the town-building of Animal Crossing, and more.

We traveled to Vienna, Austria, to visit the home (and Moon’s de facto headquarters) of co-founder Thomas Mahler. Joined by Moon’s other co-founder and CEO, Gennadiy Korol, we went hands-on with No Rest for the Wicked and filled 12 pages with our impressions and exclusive details about the game’s town-building and social features, endgame content, and early access roadmap. We also picked the brains of the two figureheads to learn how the project came to be, what to expect, as well as background on Moon’s history.

Here’s a closer look at this month’s cover.

Issue 364 also includes other great features, such as an eight-page deep-dive on the video game history of Teenage Mutant Ninja Turtles. 2024 marks the 50th anniversary of Dungeons & Dragons, so we have a retrospective of the role-playing game’s colossal influence on the game industry as told by developers. With the well-received launch of Like a Dragon: Infinite Wealth, we caught up with Ryu Ga Gotoku Studio head Masayoshi Yokoyama to discuss the game’s themes, the growing international success of the Like a Dragon franchise, and what comes next. The issue also includes previews for upcoming titles like Indiana Jones and the Great Circle, Silent Hill 2, Death Stranding 2: On the Beach, and more.

You can also try to nab a Game Informer Gold version of the issue. Limited to a numbered print run per issue, this premium version of Game Informer isn’t available for sale. To learn about places where you might be able to get a copy, check out our official Twitter, Facebook, TikTok, Instagram, BlueSky, and Threads accounts and stay tuned for more details in the coming weeks. Click here to read more about Game Informer Gold.

Print subscribers can expect their issues to arrive in the coming weeks. The digital edition launches on March 5 for PC/Mac, iOS, and Google Play. Print copies will be available for purchase in the coming weeks at GameStop.

No Rest for the Wicked Preview – Fantastically Familiar In A Faraway Land – Game Informer

Last month, I traveled to Vienna, Austria, with Game Informer editor Marcus Stewart to visit Ori series developer Moon Studios. It was here that Marcus spoke to co-founder Thomas Mahler and his fellow Moon co-founder and technical director Gennadiy Korol about No Rest For The Wicked, the action RPG adorning Issue 364 of Game Informer magazine, as you might have just learned if you watched Wicked Inside today.

I watched Marcus play the game for a couple of hours, and while I was there to film for cover story purposes, I’d be lying if I said I didn’t watch with some jealousy. No Rest For The Wicked caught my attention during its debut at The Game Awards 2023 in December, and seeing actual gameplay up close was exciting. It looked great, and judging by Marcus’s smile, it felt great, too.

Though I had to wait a few weeks, I’m happy to say I have finally played No Rest For The Wicked, and as I expected, I love it. And I’m saying that after roughly 80 minutes with a preview build that’s apparently only a taste of what’s to come in the full Early Access launch next month.


After creating my character, I’m introduced to this medieval world with a cutscene that feels ripped out of a great Game of Thrones episode. The king has died, and his close confidant is clearly worried about the future of the kingdom now that the dead king’s son has taken his place; that worry seems justified given the son-now-king immediately calls for a churchly inquisition to a faraway land that’s rumored sick with a plague. Hard cut to Wesley, my created character with his caricatured limbs that look like a gothic painting that’s begun to melt, who has arrived in said faraway land after a shipwreck. With nothing to work with, I start smashing crates and crabs in search of gear, like a sharp weapon and some armor.

Immediately, I’m struck by the painterly art style. It’s clear the team behind Ori and the Blind Forest/Will of the Wisps is the developer, even if the visual technique is different. If the Ori series uses its visuals to paint a whimsical fantasy forest, No Rest For The Wicked uses its visuals to paint a miserable fantasy to exist in. Knowing this game is inspired by the isometric ARPG likes of Blizzard’s Diablo series and From Software’s Dark Souls games, I see the vision.

That vision is even more apparent when I find a pair of daggers and some armor, giving me the confidence to move through this land like a fighter on the up. While the field of view might have you thinking it feels like Diablo, it’s much more in line with the challenge and pace of Dark Souls (Editor’s Note: look, I know it’s a tired comparison, but the game’s leads literally told us From’s games inspired this one).

I time parries to open enemies up to big hits, slash until my stamina runs out, dodge-roll out of attacks I’m not prepared to parry, and spend upwards of 60 seconds on standard mob opponents. It feels fantastic, and that feeling holds up when I pick up a large sword and shield, taking my build from a “normal” one with standard speed to a “heavy” one with slower speed and, of course, the fat roll. I find parrying with the shield much easier. Plus, I can perform standard blocks, which drain stamina but prevent huge hits of damage, something using the dual daggers doesn’t allow me to do – it’s parry or nothing.

Switching between these two builds, each with its own unique attack style, is as simple as pressing left or right on the d-pad. Up allows me to eat something like mushroom soup to restore health, while pressing down consumes a potion to regain poise, stamina, or focus, used for special controller-bumper attacks that saved me more than once. Just as I get confident taking on single enemies without worry, No Rest For The Wicked hits me with a group of three bullying the person I later learn is a blacksmith. Defeating all three takes me a few minutes and a couple of mushroom soups, but the payoff is worth it: a blacksmith to sell items to, repair damaged equipment, and purchase new armor and weapons.

I have plenty to sell because moving through No Rest For The Wicked’s spherical world is a feast for exploration. There might be a chest atop that castle ridge, an item under that sewer tunnel, something atop that fort reachable by climbing vines strewn across its side – the game invites detours from the main path often, and the reward is often worth it. It’s also where I learn Moon uses extra-difficult enemies to gate players off from its expansive world. An axe-wielding foe guards one chest I find after climbing multiple ladders up a fort. His health bar barely moves after a few hits, so I leave and decide that fight is for another day.

My hour or so of exploration and combat practice culminates in the game’s first boss fight: Warrick the Torn. It’s clear Warrick was once a man, but as some locals nearby who let me cook some soup at their bonfire explain, he’s more creature than anything else now. As I creep up toward his area, the ground shakes (and the screen, too) as a terrifying roar spreads through the trees, bristling in the wind. Moon is telling me a boss is coming.

When I step into Warrick’s arena, a quick cutscene reveals his monstrous size. Seconds later, a sword twice my length slashes through me, and my health bar is halved, just like that. Though I get in a few hits, I use this first bout to size Warrick up and determine his attack pattern. He slashes in wide, almost unavoidable swaths, lunges across the arena with his sword in front, and body slams the ground beneath him, but not before jumping up and doing that devilishly evil mid-air pause I know well from my hours with From’s games. That delayed descent is my cause of death, as I don’t take it into account when attempting to dodge-roll out of the way.

Revived at a nearby Cerim Whisper, a blue spin on the Dark Souls bonfire, I return to Warrick once more. This time, I get his health down to a sliver, and he does the same to me – he wins. Third time’s the charm, right? By my third attempt, I have his attacks memorized enough to safely dodge out of the way and counter with quick dual dagger attacks before switching to my sword/shield combo to parry his next wave of hits. A perfect parry leaves Warrick open for multiple hits, and after a few successful blocks, I take him down.

My time ends with a cutscene revealing my journey in this foreign land has only just begun. I know what’s next – I watched Marcus experience it in Mahler’s makeshift Moon headquarters – but this preview build ends here. Fortunately for you, Game Informer has plenty of exclusive details about what’s next in No Rest For The Wicked, and with our digital magazine launch on March 5 (with physical issues arriving in the coming weeks), you don’t have to wait too long to find out.

Microsoft Introduced Copilot for Finance, More AI Tools in Plans for Other Industries – Technology Org

Microsoft unveiled an artificial intelligence tool, Microsoft Copilot for Finance, designed specifically for finance departments of different companies….

The best TV of 2024: top picks for various needs

Are you in the market for a new TV but overwhelmed by the multitude of options? We prepared…

Defenders of Ukraine Notice an Increase of Old Tanks in Russian Forces – Technology Org

Russia is losing thousands of tanks in Ukraine. Different estimates show different results, but the losses of Russian…